Adverse Event Monitoring on Social Media: What Pharma Teams Need to Know

Disclaimer: Datashake provides data and enrichment infrastructure. All adverse event identification, causality assessment, and regulatory reporting must be conducted by qualified pharmacovigilance professionals. Nothing in this article constitutes medical or regulatory advice.

In 1997, a man living with HIV in the United States began posting about a strange change in his abdomen after starting the antiretroviral Crixivan (indinavir). His belly button had gone from an "inny to an outy." He didn't file a MedWatch report. He didn't call his doctor. He posted to a patient internet forum, a prototype discussion board, about what other patients had started calling "Crix Belly."

Within weeks, other HIV patients in the US and Europe were posting the same thing. The same belly, the same eerie change.

What those patients were documenting (before any formal case report or clinical investigation) was what would later be identified as lipodystrophy syndrome: a serious metabolic side effect associated with early antiretroviral therapy. The condition eventually prompted label changes, clinical trials, and FDA-approved treatments. But the first signal came from a patient forum in 1997.

That was nearly 30 years ago. The platforms have changed – patient forums have become disease subreddits, Facebook groups, TikTok comment sections, YouTube video replies, condition-specific apps.

But the dynamic has not: patients report their experiences online, often before they report them anywhere else, often in language that looks nothing like an adverse event report, often on platforms that no one is systematically monitoring.

If you're in pharmacovigilance, compliance, or digital innovation at a pharma company, this one's for you. We'll cover the regulatory obligations, the evidence for and against social media monitoring, the technical challenges that trip teams up, and what a genuinely compliant workflow looks like.

Let’s dive right in ⬇️

Why the system you rely on captures only a fraction of what's happening

A systematic review across 37 studies found that the median underreporting rate for adverse drug reactions to spontaneous reporting systems was 94%, with an interquartile range of 82–98%. A separate review puts the figure blunter still: only an estimated 5–10% of adverse drug reactions are ever formally reported.

The FDA's own documentation is clear: because healthcare professionals, patients, and consumers are not required to report adverse events, FAERS data does not contain all instances of a particular adverse event. And neither the prevalence nor incidence of an adverse event can be calculated from FAERS data alone.

Where the missing signal goes

Patients who don't file a Yellow Card or fill out a MedWatch form don't just go silent. They talk to each other.

Research consistently shows that many patients share their symptoms with strangers on social media before alerting healthcare professionals. Social media provides a level of anonymity that clinical channels don't – and patients will often disclose more detailed accounts there than they would to their doctors.

Clinical trials add another layer to the problem. The limited number of subjects and controlled study conditions make it structurally difficult to detect rare adverse drug reactions. You need at least around 10,000 subjects to have a realistic chance of observing even one case of a very rare adverse event, meaning one with a frequency of less than 1 in 10,000 individuals. Clinical trials also don't reflect real-world comorbidities, drug-drug interactions, or the full demographic range of people who will eventually be prescribed a medication.

That’s precisely why post-marketing surveillance exists. Social media is increasingly where that real-world experience lives.
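To put a rough number on the sample-size point above, here is a minimal sketch. It assumes every subject independently has the same chance of experiencing the event, which is a simplification, and the function names are illustrative rather than taken from any particular library.

```python
import math


def prob_at_least_one(n_subjects: int, event_rate: float) -> float:
    """Chance of observing one or more events among n independent subjects."""
    return 1.0 - (1.0 - event_rate) ** n_subjects


def subjects_needed(event_rate: float, confidence: float = 0.95) -> int:
    """Smallest n for which P(at least one event) reaches the confidence level."""
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - event_rate))


rate = 1 / 10_000  # a "very rare" event
print(f"10,000 subjects: {prob_at_least_one(10_000, rate):.1%} chance of one or more cases")
print(f"Subjects needed for 95%: {subjects_needed(rate):,}")
```

Under that assumption, 10,000 subjects give roughly a 63% chance of seeing even one case of a 1-in-10,000 event, and just under 30,000 are needed to reach 95% (the statistical "rule of three"). A single observed case is also rarely enough on its own to establish a signal.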

But there's a mindset shift underneath all of this.

The traditional pharmacovigilance model was built on an assumption about patient behaviour: patients experience a reaction, they tell their doctor, their doctor reports it through official channels, and the signal reaches the safety database. The system was designed around the expectation that patients would come to you.

That assumption no longer holds, and hasn't for some time. Patients today go where they're comfortable, where they feel heard, and where they can find others going through the same thing. That's a disease subreddit. A Facebook group. A TikTok comment thread. A patient forum.

The companies building effective post-market safety programs aren't waiting for patients to find the Yellow Card form. They're meeting patients where the conversation is already happening – with the infrastructure to listen to it systematically, not just react to it when it surfaces in a news article.

When social media generates the hypothesis, not just the report

The most current example of this dynamic is the GLP-1 class.

The surge in popularity of semaglutide was accompanied by a wave of unsolicited patient reports (shared on Reddit, TikTok, and health forums) of unexpected reductions in alcohol use and other addictive behaviours during treatment. With clinical trials only recently underway at the time, those anecdotal reports via social media were a primary reason for scientific interest in GLP-1 receptor agonists' effects on alcohol use.

Social media here wasn't just reporting a known side effect. It was surfacing a signal that preceded clinical investigation and drove scientific inquiry. That's a different kind of value than traditional PV is designed to capture, and it's one that only exists if someone is watching.

You're already obligated to monitor. Here's what that means in practice

Before getting into the practical and technical questions, it's worth being clear about where the regulatory obligation stands. Because there's still genuine uncertainty in some teams about what is actually required.

In the EU: explicit and specific

The EMA's Good Pharmacovigilance Practices (GVP) framework is unambiguous. Under GVP Module VI (Rev 2), marketing authorisation holders are required to regularly screen the internet or digital media under their management or responsibility for potential reports of suspected adverse reactions.

The definition of "digital media" in the guidance is broad: it includes websites, web pages, blogs, vlogs, social networks, internet forums, chat rooms, and health portals.

The obligation doesn't stop at company-owned channels. If a marketing authorisation holder becomes aware of a suspected adverse reaction report on a website or forum it doesn't manage, it is still expected to assess whether that report needs to be followed up and potentially reported.

At that point, the reporting clock applies: 15 days for serious cases. Unsolicited cases of suspected adverse reactions from the internet or digital media are to be handled as spontaneous reports, with the same reporting time frames as other spontaneous reports. And that clock starts the moment anyone at the MAH becomes aware of the minimum information required for a valid ICSR. That means pharmacovigilance awareness training matters across your entire organisation, not just the PV team.

Non-compliance carries real consequences: during GVP inspections, authorities focus heavily on ICSR management, and deficiencies can result in corrective and preventive actions, regulatory warnings, financial penalties, or in serious cases, suspension.

In the US: directional but less prescriptive

Under current ICH guidance reflected in FDA's framework, companies are not required to proactively monitor third-party sites for adverse events – but must address adverse events on third-party sites if they become aware of them.

In May 2025, the FDA issued a warning letter to Sprout Pharmaceuticals related to social media activity and noted explicitly that if a customer comments under a company post with a description of an adverse reaction, that triggers necessary reporting under 21 CFR Part 314. So a company with a social presence is not insulated from the obligation by not actively monitoring. If a company employee sees the comment, the clock has started.

Beyond compliance: the brand risk

The regulatory obligation is reason enough to monitor. But there's a second argument that doesn't depend on GVP at all.

What starts as a single TikTok video or Reddit thread about a drug reaction doesn't stay there. It gets shared, clipped, quoted in patient groups, picked up by health journalists, and amplified across platforms faster than any compliance team can respond to it.

➡️ Research confirms that pharma communicators expect to face more than three crises per year (far more than peers in other industries) and over 60% believe misinformation is a likely trigger.

Example

In November 2022, a fake Twitter account impersonating Eli Lilly posted that "insulin is free now." The tweet went viral. Lilly's stock fell over 4% that day, erasing billions in value, and took weeks to recover.

That was misinformation. But the same dynamic applies to authentic adverse event conversations – a cluster of patient posts about a serious reaction can seed a media cycle, attract plaintiffs' attorneys, and land a company on the front page of Bloomberg before the PV team has filed a single ICSR.

Proactive monitoring lets you know what's circulating before it becomes a crisis, and gives you the ability to act on it.

Summary

Regulators have signalled that further revisions to social media monitoring requirements are expected.

The appropriate response to that uncertainty is not to wait. It's to set up the infrastructure and workflows that allow systematic, documented monitoring with a clear audit trail.

That way, when guidance firms up, you're already in a defensible position, and a conversation gaining traction about your product doesn't catch your communications team off guard.

Social media can flag early safety signals – but only if you're looking in the right places

Social media can detect safety signals years ahead of regulatory action. But the platform matters enormously.

A scoping review published in JMIR Public Health Surveillance (2021) found that nine studies (64.2% of those reviewed) concluded that social media could detect safety signals between 3 months and 9 years before regulatory authorities took action.

The specific cases are striking:

- Statin-induced cognitive impairment (which led to FDA label changes in 2012) was detectable in web-based forum data as early as 9 years before the label change

- A significant relationship between bupropion and agitation was identified in forum data 7 years before FDA action

- Fluoxetine-induced suicidal thoughts: detectable 1 year in advance

- Simvastatin-induced kidney disease: 6 years in advance

- Lansoprazole-induced diarrhoea: 13 years in advance

But the most important finding in this body of research is the one that tends to get overlooked: 88.9% of the studies that found positive early detection results were using specialised healthcare social networks and disease-specific forums – not general social media platforms like Twitter or Facebook.

The WEB-RADR nuance

The EU's Innovative Medicines Initiative WEB-RADR project was a three-year public-private consortium involving the EMA, MHRA, Novartis, GlaxoSmithKline, AstraZeneca, and the Uppsala Monitoring Centre. It’s the most rigorous investigation of social media in pharmacovigilance conducted to date. But its headline finding is not what enthusiasts would want to hear.

The WEB-RADR consortium concluded that broad-ranging statistical signal detection in Twitter and Facebook, using currently available methods for adverse event recognition, performs poorly and cannot be recommended at the expense of other pharmacovigilance activities.

But WEB-RADR also found that there is genuine added value from social media monitoring for specific niche areas, particularly disease-specific forums and condition-focused communities. The consortium pointed to drug abuse and pregnancy-related outcomes as examples where social media data added meaningful signal beyond traditional sources.

This explains why the early detection evidence clusters where it does: the studies that worked used specialised forums. The studies that didn't work used generic social networks without contextual filtering or enrichment. ⚠️

The rare disease case: why patient forums are irreplaceable

For rare cancers, the case for patient forum monitoring is arguably strongest. Clinical research into rare diseases is scarce due to a combination of low funding, low pharmaceutical industry interest, and dispersed patient communities. Online forums can enable the kind of coordinated, trans-geographic patient reporting that would otherwise be impossible to aggregate.

Research analysing a GIST (gastrointestinal stromal tumour) patient forum for imatinib users found 214 novel adverse drug events not reported in the registration trials, with the most prevalent being muscle cramp, eye problems, depression, insomnia, and amnesia. The study also identified real-world data for long-term adverse events that post-market clinical studies had not captured, primarily because those events only appeared in patients who stayed on treatment for five years or more.

This points to a structural blind spot in clinical trials that goes beyond sample size. ⬇️

1️⃣ Trials end

2️⃣ Patients who reduce dosages due to side effects drop out

3️⃣ Rare conditions don't generate enough spontaneous reports to trigger conventional signal detection

4️⃣ A disease-specific forum aggregates a dispersed global population into a single, continuously updated data source – and that's a type of pharmacovigilance evidence that nothing else currently replicates.

What adverse event conversations look like in the wild

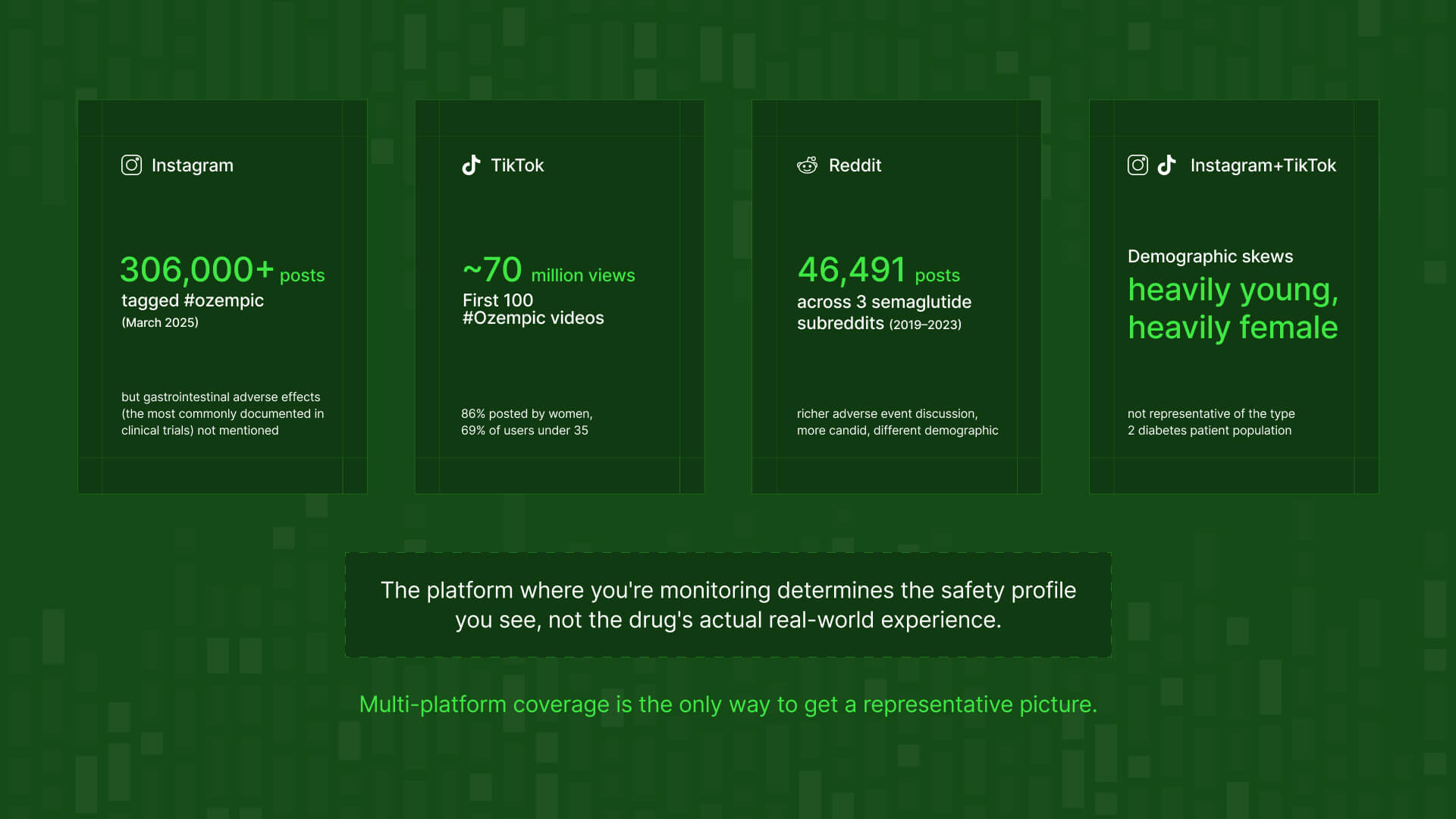

A TikTok video. A user documents a reaction to a GLP-1 drug on camera – nausea, hair loss, something unexpected. The first 100 TikTok videos tagged #Ozempic collectively garnered nearly 70 million views. By March 2025, there were over 306,000 Instagram posts with the hashtag #ozempic alone. The signal isn't in the title or description. It's in the comments, in the replies, buried in user-generated content that no keyword alert on a company dashboard would surface. The video itself may be fine; the comment thread that follows is where the adverse event conversation lives.

A Reddit thread. Someone posts in r/diabetes or r/antidepressants about a side effect they can't explain. The thread gets 200 replies within 48 hours. Multiple users corroborate the same experience, compare medications, discuss whether it's worth reporting. This is a rich, structured conversation about drug safety happening in real time – in a completely different register than anything a traditional PV system would process.

A YouTube comment section. A patient's video about managing a chronic condition gets 50,000 views. In the comments, someone describes an adverse reaction to the drug they're taking. It sits at comment #847. No social listening tool built for brand monitoring finds it unless it's specifically designed to crawl comment content at that depth.

A patient forum post. A niche community for a rare cancer has 4,000 members. One post about a long-term side effect of a targeted therapy gathers 30 responses over two weeks – each one a detailed, first-person account. That’s the kind of data the GIST/imatinib research relied on to identify 214 novel adverse events the registration trials missed. It's completely invisible to a monitoring tool pointed only at the major social platforms.

➡️ The common thread here: the signal is rarely where the obvious monitoring is pointed.


Why anonymity surfaces what clinical channels don't

There's another category of adverse event that formal reporting consistently misses. Not because patients don't experience it, but because they don't tell their doctors about it.

A 2024 comparative analysis of adverse event reports on Reddit versus FAERS for mental health drugs found significant differences in both the frequency and type of events reported on each platform:

➡️ Reddit showed higher rates of sexual dysfunction and cognitive disorders, events that were comparatively rare in FAERS. The researchers attributed the disparity to Reddit's pseudonymous nature encouraging more candid discussion.

In more specific terms:

- For Lexapro, sexual dysfunction was the most commonly reported adverse event on Reddit – significantly more so than in FAERS

- For Zoloft, Reddit users reported higher rates of apathy, fatigue, and sexual dysfunction than those appearing in formal reports

- For Wellbutrin, anxiety, fatigue, and cognitive disorder topped the Reddit list – categories that appeared with different frequency in FAERS

None of this means FAERS is broken. It means voluntary clinical reporting has predictable blind spots. Patients don't disclose sexual dysfunction or cognitive fog to their doctor at anything close to the rate they experience it. But they will post about it anonymously to a subreddit where other people are going through the same thing.

How platforms shape what gets disclosed

Different platforms don't just attract different demographics; they produce fundamentally different disclosure behaviours. Research suggests that Facebook users are reluctant to discuss negative health topics due to the desire to present positive self-images. A patient who will describe antiretroviral side effects in detail on a disease forum may post a recovery update and workout photo on Instagram.

Data on semaglutide (Ozempic) illustrates this ⬇️

Research collecting semaglutide-related posts across Instagram, TikTok, X, Facebook, and YouTube found that the very common gastrointestinal problems during semaglutide therapy were not even mentioned on Instagram – despite being among the most frequently documented adverse effects of GLP-1 receptor agonists in clinical trials.

⚠️ A monitoring program relying primarily on Instagram for semaglutide surveillance would produce a safety profile that dramatically misrepresents the real-world patient experience of the most prescribed drug in its class.

The demographic dimension compounds this: approximately 69% of Instagram users and 70% of TikTok users are under 35, and almost exclusively young women posted their semaglutide experiences on these platforms. A diabetes drug's patient population skews much older, so a monitoring program built around those platforms is simultaneously under-representing the most common adverse effects and the demographic most relevant to the approved indication.

The risk around platform concentration

This is the social media equivalent of a concept well-understood in data infrastructure: source concentration risk. If the majority of your adverse event signal is coming from a single platform, your safety picture is weighted toward that platform's user behaviour, disclosure norms, and demographic profile. Not the actual patient population.

This is both a data quality issue and a compliance risk for PV teams. A monitoring program that systematically misses one demographic's adverse event experience, or one category of adverse event, is skewed in ways that could matter in a GVP inspection.

💡 This problem is more common (and more consequential) than most teams realise. Here's what incomplete data looks like in practice → Why your social media data is incomplete

The challenge: patients don't describe adverse events like clinicians do

Even with the right sources, extracting pharmacovigilance-relevant information from social media accurately and efficiently is a significant technical challenge, both for natural language processing research and for the pharmacovigilance domain.

Patients don't write "I experienced an adverse event." They write things like:

- "Three weeks on this and I can't think straight, is this the statin brain fog everyone talks about?"

- "Ozempic finger is real. My wedding ring doesn't fit anymore."

- "Since starting the new medication I've been absolutely exhausted, doctor says I'm fine but something's off."

The vocabulary gap between patient language and clinical terminology is the central technical challenge in this field. And it goes deeper than substituting lay terms for MedDRA codes.

A well-documented example from NLP research: a post containing "I'm just not sleepy tonight" was flagged by a named entity recognition tagger as containing a potential adverse event of "sleepy." The correct adverse event to capture was insomnia, but the negation wasn't detected.
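The failure described above can be illustrated with a deliberately simple, rule-based negation check: look for a negation cue in the few tokens before a symptom term. This is a sketch loosely in the spirit of rule-based negation detection, not the tagger from the research; the cue list, window size, and function name are all assumptions.

```python
import re

# Illustrative cue list; real systems use much larger, curated lexicons.
NEGATION_CUES = {"not", "no", "never", "without", "can't", "cannot", "don't", "isn't", "wasn't"}


def find_mentions(text, symptom_terms, window=3):
    """Return each symptom mention with a flag for a nearby preceding negation."""
    tokens = re.findall(r"[a-z']+", text.lower().replace("’", "'"))
    mentions = []
    for i, token in enumerate(tokens):
        if token in symptom_terms:
            preceding = tokens[max(0, i - window):i]
            negated = any(t in NEGATION_CUES for t in preceding)
            mentions.append({"term": token, "negated": negated})
    return mentions


print(find_mentions("I'm just not sleepy tonight", {"sleepy"}))
print(find_mentions("So sleepy since starting the new pills", {"sleepy"}))
```

Flagging "sleepy" as negated is only half the job. Deciding that "not sleepy" points to possible insomnia is a clinical interpretation, which is why flagged mentions go to human review rather than straight into a case record.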

Beyond language, social media produces significant volumes of irrelevant information that require sophisticated filtering. Unlike structured safety databases, social media lacks standardisation in adverse event reporting, and establishing a direct causal relationship between a drug and an adverse event is complex. Social posts often lack detailed patient history and medication context.

💡 This is why combining human expertise with NLP tends to produce better results than either alone. Any NLP tool needs regular updating to keep pace with evolving patient slang, colloquialisms, and even emoji usage in health-related posts.

Why generic social listening tools aren't built for this

Standard social listening platforms are built to answer: what are people saying about our brand? They're optimised for volume, sentiment, and share of voice. They were not designed to:

❌ Distinguish "I felt dizzy after taking this drug" (potentially reportable) from "I get dizzy just hearing the price of this drug" (not reportable)

❌ Understand temporal relationships: "since starting X" vs "before I switched to Y"

❌ Detect negation accurately in medical contexts

❌ Recognise severity gradations or the evolving patient vocabulary for a specific therapeutic area
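As a rough illustration of the temporal point in the list above, the sketch below labels whether a post places an experience after or before a drug exposure. The patterns are hypothetical and cover only a handful of phrasings; a production system would need far broader coverage and evaluation against labelled data.

```python
import re

# Phrases that signal an exposure event ("starting", "switched to", ...).
_EXPOSURE = r"(?:i\s+)?(?:start(?:ed|ing)|taking|took|switch(?:ed|ing)\s+to)\b"
AFTER_EXPOSURE = re.compile(rf"\b(?:since|after)\s+{_EXPOSURE}")
BEFORE_EXPOSURE = re.compile(rf"\bbefore\s+{_EXPOSURE}")


def temporal_relation(text):
    """Classify a post as after_exposure, before_exposure, mixed, or unclear."""
    lowered = text.lower()
    after = bool(AFTER_EXPOSURE.search(lowered))
    before = bool(BEFORE_EXPOSURE.search(lowered))
    if after and before:
        return "mixed"
    if after:
        return "after_exposure"
    if before:
        return "before_exposure"
    return "unclear"


for post in [
    "I felt dizzy after taking this drug",
    "Had these headaches before I switched to Y",
    "I get dizzy just hearing the price of this drug",
]:
    print(temporal_relation(post), "|", post)
```

An "unclear" label does not mean a post is irrelevant, only that none of these cues fired. It is one input to triage, not a decision.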

The typical response is to add more tools: one for general social, one for forums, one for app reviews, another for news. The result is three or four platforms running simultaneously, each with different data formats, different coverage gaps, different update frequencies, and no single audit trail. The operational complexity grows faster than the coverage does.

What this creates is exactly the wrong outcome: multiple disconnected sources, difficult to reconcile, impossible to present coherently to a GVP inspector, and still leaving significant parts of the patient conversation unmapped.

The alternative

The alternative is owning a well-specified data layer – supported by a provider with genuine multi-source coverage – and building your enrichment and triage workflow on top of it. That's the approach that simplifies operations and produces a defensible audit trail.

💡 If you're not familiar with the distinction between a social listening tool and a data infrastructure layer, this is worth reading first → What is headless social listening

5 steps to a compliant monitoring workflow

For teams building or auditing their social media adverse event monitoring programs, here's what a compliant end-to-end workflow looks like in practice. ⬇️

Step 1: Source selection and data collection

Define which platforms and communities are relevant to your therapeutic area and patient population. This requires genuine research into where your specific patients congregate online.

For most indications, this means going well beyond a single major platform to include:

- Disease-specific subreddits and condition-focused forums

- Patient advocacy sites and rare disease community platforms

- App store reviews for companion devices or digital therapeutics

- YouTube comment sections on treatment-related content

- Publicly accessible Facebook groups and health communities

The data must be pre-login and publicly available only. No access to private groups, protected health information, or authenticated content.
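One way to make that rule enforceable is to record it in the source list itself. The sketch below is a hypothetical source registry, not a real product schema: the `Source` fields and the example entries are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    name: str
    kind: str
    requires_login: bool  # True means content sits behind authentication
    rationale: str        # why this community is relevant to the indication


def eligible_sources(sources):
    """Keep only sources whose content is publicly readable without logging in."""
    return [s for s in sources if not s.requires_login]


candidates = [
    Source("r/diabetes", "subreddit", False, "large type 2 diabetes community"),
    Source("Closed patient Facebook group", "facebook_group", True, "active, but private"),
    Source("Rare cancer forum (public boards)", "forum", False, "long-term treatment accounts"),
]

for source in eligible_sources(candidates):
    print(source.name, "-", source.rationale)
```

Keeping a written rationale next to each source also means the list doubles as documentation of why each community was included.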

👀 Not sure where the legal lines sit? Read: Is web scraping legal? What you need to know in 2026 →

Step 2: Signal enrichment

Apply medical-context enrichment to the raw data stream to flag text containing language patterns associated with adverse event reporting:

- Symptoms mentioned in relation to a named product or drug class

- Temporal relationships ("after starting," "since switching to," "three weeks in")

- Severity indicators and emotional framing around health experiences

- Drug name variations, brand names, generic names, and common misspellings

The output is a prioritised queue of content for human review, not a list of confirmed adverse events.
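A minimal sketch of that kind of enrichment might score each post on the signals listed above and sort the results for review. The vocabularies, weights, and function names here are illustrative assumptions ("ozempik" stands in for a common-misspelling entry), and co-occurrence in one post is only a crude proxy for a symptom being mentioned "in relation to" a product.

```python
import re

# Illustrative vocabularies; real ones would be maintained per therapeutic area.
DRUG_VARIANTS = {"semaglutide": {"semaglutide", "ozempic", "wegovy", "ozempik"}}
SYMPTOM_TERMS = {"nausea", "dizzy", "exhausted", "hair loss"}
TEMPORAL_CUES = {"after starting", "since switching to", "three weeks in"}
SEVERITY_CUES = {"severe", "unbearable", "hospital"}


def matches(text, terms):
    """Return the terms that appear in text as whole words or phrases."""
    return [t for t in sorted(terms) if re.search(rf"\b{re.escape(t)}\b", text)]


def enrich(post):
    text = post.lower()
    drugs = [g for g, variants in DRUG_VARIANTS.items() if matches(text, variants)]
    symptoms = matches(text, SYMPTOM_TERMS)
    score = 0
    if drugs and symptoms:  # drug and symptom co-occur in the same post
        score = 2 + bool(matches(text, TEMPORAL_CUES)) + bool(matches(text, SEVERITY_CUES))
    return {"post": post, "drugs": drugs, "symptoms": symptoms, "score": score}


def review_queue(posts):
    """Highest-scoring posts first; posts scoring 0 are left out of the queue."""
    flagged = [e for e in map(enrich, posts) if e["score"] > 0]
    return sorted(flagged, key=lambda e: e["score"], reverse=True)


queue = review_queue([
    "Ozempic prices are wild",
    "Feeling nausea after starting Wegovy",
    "Three weeks in on Ozempic and the nausea is severe",
])
for item in queue:
    print(item["score"], item["post"])
```

The score only orders the queue. Nothing in it classifies a post as an adverse event.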

Step 3: Human pharmacovigilance review

This is the step that cannot be automated or delegated to infrastructure. Qualified PV professionals assess each flagged item against the minimum ICSR criteria under GVP Module VI:

- At least one identifiable reporter

- At least one identifiable patient

- At least one suspect adverse reaction

- At least one suspect medicinal product

Even with well-designed enrichment, the human review layer is non-negotiable. As IQVIA's pharmacovigilance specialists have noted, the ideal of comprehensive automated adverse event identification without manual review is not yet a reality. Technology handles scale and triage; experts handle decisions.
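The four minimum criteria can be captured as a simple checklist that records a reviewer's assessment. In this sketch the class and field names are assumptions, and every value is entered by a qualified PV reviewer; the code stores the outcome and reports what is missing, nothing more.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class ReviewerAssessment:
    """Filled in by a qualified PV reviewer; the code only records the outcome."""
    identifiable_reporter: Optional[str] = None
    identifiable_patient: Optional[str] = None
    suspect_reaction: Optional[str] = None
    suspect_product: Optional[str] = None


def missing_criteria(assessment):
    """Names of the minimum criteria the reviewer has not been able to establish."""
    return [f.name for f in fields(assessment) if not getattr(assessment, f.name)]


def meets_minimum_criteria(assessment):
    return not missing_criteria(assessment)


partial = ReviewerAssessment(
    identifiable_reporter="forum username",
    suspect_reaction="muscle cramp",
    suspect_product="imatinib",
)
print(meets_minimum_criteria(partial), missing_criteria(partial))
```

Recording which criterion was missing, rather than only a yes or no, gives the later audit trail something concrete to show.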

Step 4: Regulatory reporting

Cases that meet ICSR criteria are handled via established regulatory frameworks and timelines. For serious, unexpected reactions, the 15-day clock applies from the moment of awareness – including awareness via a social media post seen by any employee.

Documentation of the decision-making process is as important as the submission itself. In a GVP inspection, you need to demonstrate not just that you monitored, but how you assessed each flagged case and why you made the reporting decisions you did.
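A deadline tracker for the 15-day clock can be very small. The sketch below assumes the awareness date counts as day zero and that the window is measured in calendar days; teams should confirm the counting convention against their own procedures and the applicable guidance. The function names are illustrative.

```python
from datetime import date, timedelta


def reporting_due_date(awareness_date: date, window_days: int = 15) -> date:
    """Due date, treating the awareness date as day zero (an assumption)."""
    return awareness_date + timedelta(days=window_days)


def days_remaining(awareness_date: date, today: date, window_days: int = 15) -> int:
    """Calendar days left before the due date; negative means overdue."""
    return (reporting_due_date(awareness_date, window_days) - today).days


seen_by_employee = date(2025, 3, 3)
print(reporting_due_date(seen_by_employee))
print(days_remaining(seen_by_employee, today=date(2025, 3, 10)))
```

The important input is the awareness date itself, which is the date any employee first saw the post, not the date it reached the PV team.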

Step 5: Continuous monitoring and vocabulary updating

This is not a one-time setup. Three things change continuously:

- Patient language evolves: new slang, new drug nicknames, new community-specific shorthand

- New platforms emerge and patient communities migrate

- Off-label use creates new patient populations with different adverse effect profiles

The sources, enrichment parameters, and monitoring configuration all need ongoing maintenance. A program that was well-calibrated 12 months ago may be missing significant signal today.
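One hedged way to spot vocabulary drift is to count words that keep appearing alongside a drug name but are not yet in the monitored vocabulary, then hand the frequent ones to a human for review. Everything in this sketch is an assumption: the function name, the threshold, and the tiny word lists.

```python
import re
from collections import Counter


def candidate_terms(posts, drug_names, known_vocab, min_posts=2):
    """Unknown words seen in at least min_posts posts that mention a monitored drug."""
    counts = Counter()
    for post in posts:
        tokens = set(re.findall(r"[a-z]+", post.lower()))
        if not tokens & drug_names:
            continue  # skip posts that never mention a monitored drug
        counts.update(tokens - known_vocab - drug_names)
    return [(term, n) for term, n in counts.most_common() if n >= min_posts]


posts = [
    "Ozempic finger is real",
    "Anyone else get Ozempic finger?",
    "My finger hurts",  # no drug mention, so it is ignored
]
known = {"is", "real", "anyone", "else", "get"}  # stopwords plus existing vocabulary
print(candidate_terms(posts, {"ozempic"}, known))
```

The output is a list of candidates for a person to look at, not an automatic vocabulary update.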

How to evaluate your current social data setup

Not every team needs the same solution, and the appropriate scope of social media monitoring depends on the therapeutic area, the product lifecycle stage, and the patient population. But these three questions apply broadly.

Where are your patients talking?

This is therapeutic area-specific and demographic-specific. Patients managing a rare cancer on long-term treatment are on different platforms than patients newly prescribed a GLP-1 agonist, who are on different platforms than patients managing treatment-resistant depression. Source selection requires genuine knowledge of your patient community – not a default assumption that Twitter and Facebook cover the population.

What percentage of your monitoring signal comes from one platform?

If more than half your social adverse event data is coming from a single source, your monitoring program has the same structural vulnerability as any over-concentrated dataset: it's weighted toward that platform's user behaviour, disclosure norms, and demographics – not the actual patient population. This is both a data quality issue and, increasingly, a regulatory one. ⚠️
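Checking for that concentration takes only a few lines once each flagged item carries a platform label. This sketch uses the "more than half" threshold from the paragraph above as a default; the function names and sample counts are illustrative.

```python
from collections import Counter


def platform_shares(platforms):
    """Share of flagged items per platform, as fractions that sum to 1."""
    if not platforms:
        raise ValueError("no flagged items to analyse")
    counts = Counter(platforms)
    total = sum(counts.values())
    return {platform: n / total for platform, n in counts.items()}


def concentration_check(platforms, threshold=0.5):
    """Return the top platform, its share, and whether it exceeds the threshold."""
    shares = platform_shares(platforms)
    top, share = max(shares.items(), key=lambda kv: kv[1])
    return top, share, share > threshold


flagged = ["reddit"] * 6 + ["patient_forum"] * 3 + ["youtube"]
print(concentration_check(flagged))
```

Running the same check per adverse event category or per product can show whether the skew is general or limited to one part of the safety picture.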

Can you demonstrate the audit trail?

In a GVP inspection, you will need to demonstrate:

- Which sources were included in monitoring and why

- What methodology was used for enrichment and triage

- The provenance and collection methodology of the data itself

- The decisions made about each identified potential case, and the rationale

Documentation of the data infrastructure is as important as the data. A compliance-ready social monitoring program needs to be able to demonstrate to an ethics committee, data protection officer, or regulatory inspector exactly where the data came from, how it was collected, and how it was processed.
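The items above map naturally onto a per-decision audit record. The sketch below is one possible shape, not a regulatory template: the field names are assumptions, and the content hash is included only so a record can later be matched to the exact text that was reviewed.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AuditRecord:
    source: str
    source_rationale: str
    collection_method: str
    collected_at: str          # ISO 8601 timestamp
    enrichment_version: str
    decision: str
    decision_rationale: str
    reviewer: str
    content_sha256: str        # fingerprint of the reviewed text


def make_record(content: str, **details) -> AuditRecord:
    digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
    return AuditRecord(content_sha256=digest, **details)


def to_json_line(record: AuditRecord) -> str:
    """Serialise one record as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = make_record(
    "Example post text",
    source="Rare cancer forum (public boards)",
    source_rationale="long-term treatment accounts",
    collection_method="public pages, no login",
    collected_at="2025-03-03T09:00:00Z",
    enrichment_version="vocab-2025-02",
    decision="not reportable",
    decision_rationale="no identifiable patient",
    reviewer="PV reviewer ID 17",
)
print(to_json_line(record))
```

Writing one line per decision, including the decisions not to report, is what lets a team show how each flagged case was assessed.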

What to look for in a data infrastructure provider

- What sources are actually covered – not just listed, but with verifiable collection methodology and coverage metrics?

- How is the enrichment layer built, and how is it kept current with evolving patient vocabulary in your specific therapeutic areas?

- Is the data pre-login and publicly available only, with no exposure to private or protected health information?

- What compliance documentation and audit trail is provided that would satisfy a GVP inspection or ethics committee review?

- Does the provider clearly distinguish between data infrastructure and pharmacovigilance services – and does it decline to perform the latter?

⚒️ Of course, there's the question of whether to build that infrastructure internally or work with a specialist provider. Here's how to think through that decision →

Key takeaways

- Patients have been reporting drug experiences online since the 1990s. The scale is now millions of posts across hundreds of communities, in real time, globally.

- The regulatory obligation to monitor is already codified in EU GVP Module VI and growing in the US.

- Structured social monitoring can surface safety signals years before conventional reporting. But only when using the right sources, not general social platforms.

- Platform selection is the variable that determines whether your program adds value.

- Enrichment and triage matter as much as coverage – and every adverse event classification and reporting decision must sit with a qualified PV professional.

- The question isn't whether to monitor. It's whether your current program is listening where your patients actually speak.

Not sure whether your current social data coverage actually reaches the communities your patients use? Talk to the Datashake team →
