The Most Impactful Use Cases for Social Data in 2026

Over 5.4 billion people use social media. The conversations happening across those platforms are the largest unfiltered corpus of human opinion ever created: candid, specific, real-time, and publicly available.

Yet so many organisations are still using it to count brand mentions.

Here's what you can actually do with social data in 2026: the organisations generating real value from it aren't running social listening. They're training AI models. Detecting drug safety signals. Identifying product gaps competitors haven't shipped yet.

The teams still focused on dashboards and sentiment scores are sitting on a gold mine and panning for rocks.

(If the shift away from closed social listening tools is new territory for you, our article on headless social listening explains the model that makes it possible.)

The problem is that a dataset good enough for brand monitoring is often the wrong dataset for AI training. What works for crisis detection doesn't necessarily work for pharmacovigilance. One size doesn't fit all, and pretending it does leads to insights built on the wrong foundation.

Here are the six use cases where social data is creating the most impact in 2026, what each one actually requires from a data perspective, and how to evaluate whether a provider can genuinely deliver it. ⬇️

Who this is for:

- Data and analytics leaders evaluating social data infrastructure

- Product and R&D teams using social data for research and development

- Sales and competitive intelligence professionals

- HR and talent acquisition teams tracking employer reputation

- Anyone responsible for making strategic decisions from social data

What you'll learn:

- Why the same dataset can't serve every use case, and what to use instead

- Which six use cases are generating the most value from social data in 2026

- Why single-source datasets produce systematically biased outputs

- How to evaluate whether your current data coverage is fit for purpose

- What questions to ask providers that most can't answer cleanly

1. AI Model Training and Fine-Tuning

Every company building AI-powered products needs training data. The models driving sentiment analysis, intent detection, customer intelligence, and conversational AI are only as good as what they were trained on.

For a decade, the default source was the open web: scraped articles, Wikipedia, forum threads, public social content. That well is running dry. ⬇️

Epoch AI estimates the stock of quality human-generated public text for AI training sits at around 300 trillion tokens – and at current training rates, could be fully utilised between 2026 and 2032. OpenAI researchers working on GPT-4.5 noted that a shortage of fresh data was a greater constraint than a lack of computing power.

This means the data that made large language models useful is increasingly contested, restricted, and exhausted.

The shift to curated social data

The teams that are getting ahead of this are building fine-tuned models on curated social data (conversation threads, review corpora, forum discussions) that reflect how real people talk about specific topics, industries, and products. Not what was written about those things. What was said about them, unprompted, by people who had an actual experience or opinion.

Why the source of training data fundamentally changes the model

The difference is huge.

An LLM trained on press releases and Wikipedia articles learns how journalists describe a pharmaceutical product. A model trained on patient forums learns how patients describe taking it – the side effects they mention, the language they use when something goes wrong, the way frustration and relief sound in unstructured text.

For any company building health, CX, or consumer intelligence AI, those are fundamentally different models that produce fundamentally different outputs.

Forrester projects that by mid-2026, 40% of enterprises will use LLMs to query third-party social data. The companies building those LLMs need the data first.

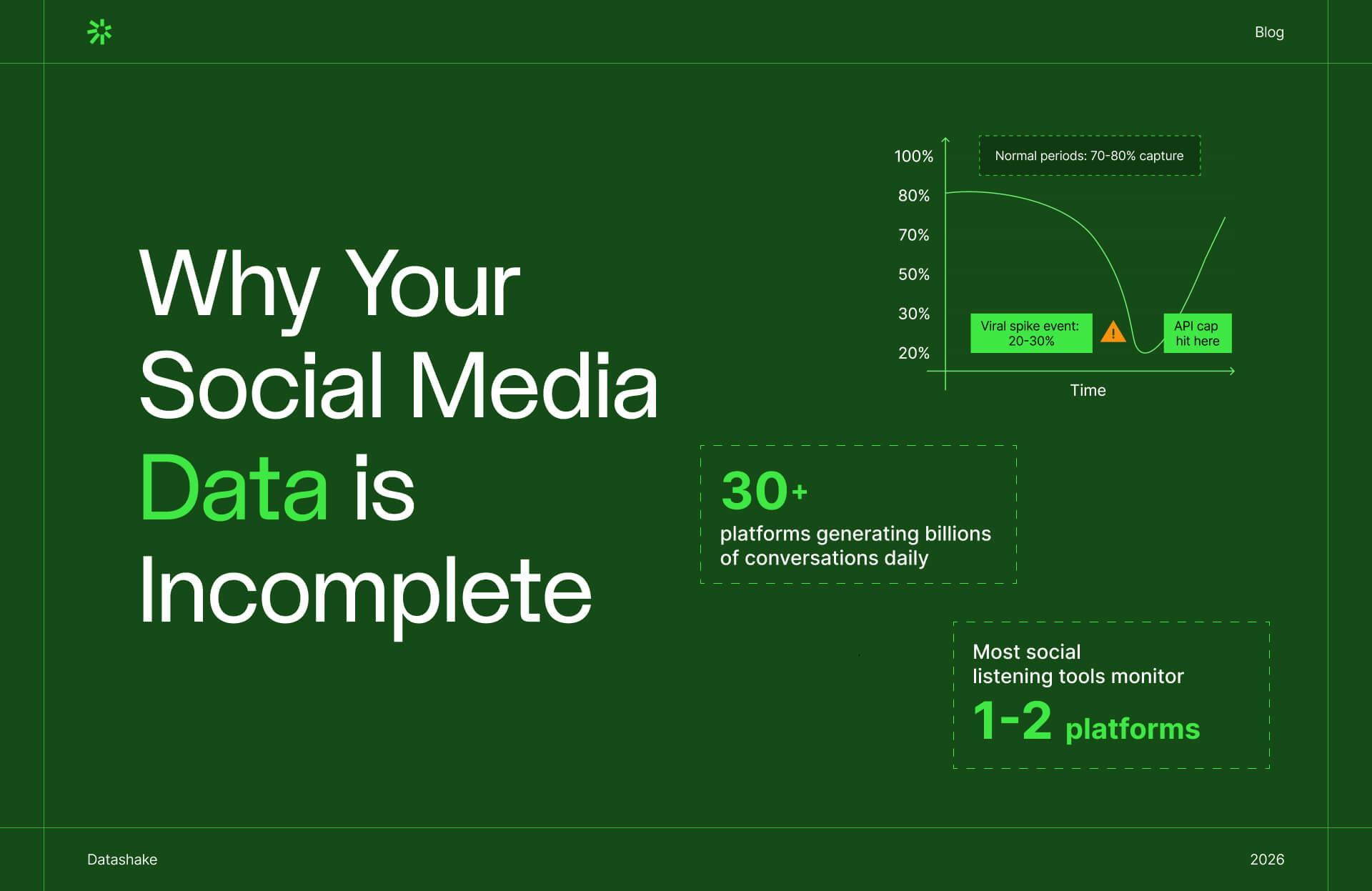

The 50% rule

The biggest risk in AI training isn't volume, it's representativeness. And the representativeness problem runs deeper than most teams realise.

There are two layers of bias embedded in any social dataset.

1️⃣ The first is platform-level: every major platform has a distinct demographic profile. Twitter/X has become the most male-dominated major platform, while Instagram, Snapchat, and TikTok consistently overrepresent women. Facebook and YouTube come closest to matching population averages. A model trained predominantly on one platform inherits that platform's demographic skew.

2️⃣ The second is participation-level. Even within a platform, the 90-9-1 rule holds: roughly 90% of users lurk without contributing, 9% contribute occasionally, and 1% produce the vast majority of content. That means the "social data" from any single platform is largely the output of a small, vocal, highly active minority – which is demographically distinct from the broader user base. Research confirms that sentiment expressed by high-frequency users differs measurably from that of low-frequency users on the same platform.

Put both layers together and you have a dataset that reflects neither the population nor the platform's users – but the most vocal segment of the most represented platform in your training corpus.

How the 50% rule applies

If any single source accounts for more than 50% of your training data, your model has a bias problem you haven't named yet. The model performs well in demos and poorly in production, and the root cause is invisible unless you know where to look.
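As a rough illustration, the 50% check can be run against any dataset that records where each item came from. The sketch below assumes each record carries a `source` field; that field name and the sample data are hypothetical, not a description of any provider's schema.

```python
from collections import Counter

def source_shares(records):
    """Return each source's share of the dataset as a fraction of all records."""
    counts = Counter(r["source"] for r in records)
    total = sum(counts.values())
    return {source: n / total for source, n in counts.items()}

def dominant_sources(records, threshold=0.5):
    """List sources whose share exceeds the threshold (0.5 mirrors the 50% rule)."""
    shares = source_shares(records)
    return sorted(s for s, share in shares.items() if share > threshold)

# Hypothetical sample: 6 of 10 records come from a single platform.
sample = (
    [{"source": "platform_a"}] * 6
    + [{"source": "forum_b"}] * 3
    + [{"source": "reviews_c"}] * 1
)

print(source_shares(sample))
print(dominant_sources(sample))
```

Running the same check per language, region, or time window is a natural extension, since a dataset can look balanced overall and still be dominated by one source within a slice.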

Effective AI training needs genuine linguistic diversity – different platforms, communities, demographics, and geographies – so the model learns to handle the full range of how humans actually communicate.

💡 We've put together a full framework for evaluating whether your social data is actually representative if you want to stress-test your current setup against these criteria.

What AI model training and fine-tuning requires from your data

Practically, this means you need:

- High-volume, multi-source data – not just mainstream platforms, and not dominated by any single one

- Full conversation threads with context and metadata intact, not just top-level posts

- Regional and multilingual coverage for models that need to work across geographies

- Source transparency – a provider who can tell you exactly what percentage of your dataset comes from each source type, not just a total source count

2. Crisis Intelligence

87% of executives now rank reputation risk above other strategic risks, according to Deloitte. Organisations with established response protocols recover from reputation damage up to three times faster than those without.

And the speed at which reputational damage travels has compressed dramatically. The Astronomer/Coldplay Kiss Cam incident in 2025 went from a clip at a concert to global news within hours, with internet users identifying the executives involved and framing the corporate governance narrative before the company had issued a single statement.

Crises don't start where you're watching

Crises rarely originate where organisations are looking. They start in places no algorithm is prioritising and no monitoring dashboard is watching: a thread in a niche subreddit, a comment on a forum with 400 members, a video posted by someone with no audience.

In fact, almost every major reputational event of the last fifteen years has proven this. ⬇️

👀 In 2009, an obscure Canadian musician named Dave Carroll posted a YouTube video about United Airlines breaking his guitar. He had no following. Within a single day it had 150,000 views; within weeks it had reached millions, with United Airlines reportedly losing $180 million in shareholder value. United's monitoring tools caught it on Twitter less than 24 hours after posting, but by then the story had already escaped.

More recently, the "Did Michael Cera create CeraVe?" conspiracy theory started as a joke in a small subreddit. It bubbled for years (with an early thread traced to r/stupidquestions) before being picked up by 450 influencers and generating 15.4 billion earned impressions, eventually becoming the hook for CeraVe's Super Bowl campaign. That one ended well, but most don't.

The underlying dynamic is structural: viral conversations typically start in subreddits, get upvoted and shared within the platform, then migrate to TikTok, Instagram, and eventually mainstream media.

➡️ By the time a story is trending on a high-volume platform, it has already been forming somewhere else for days or weeks – building momentum, accumulating detail, acquiring the framing that will stick.

Monitoring only one or two platforms catches the fire after it's already burning. Deep crisis intelligence means coverage across the full surface area of where conversations about your brand, industry, and stakeholders actually happen. That includes the places that are inconvenient to monitor, the communities too small to appear on any dashboard, and the users nobody has ever heard of.

💡 We've covered why these gaps exist structurally, and which sources are most commonly missing, if you want to find out more.

Why baseline data changes the game

Speed alone isn't enough. Without historical depth, you can't distinguish a genuine emerging signal from normal noise. A five-fold increase in mentions of a product complaint looks alarming in isolation. Against five years of baseline data, you can determine whether it represents a genuine anomaly or a seasonal pattern you've seen before.

➡️ Alerts tell you something happened. Intelligence tells you whether it matters and how fast it's moving.
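One simple way to put a baseline to work is to compare the current count with the same period in previous years. The sketch below uses a z-score for that; the threshold of 3 and the sample figures are illustrative assumptions, and a production system would likely use a more robust seasonal model.

```python
from statistics import mean, stdev

def seasonal_zscore(current, same_period_history):
    """Z-score of the current count against counts from the same period in prior years."""
    if len(same_period_history) < 2:
        raise ValueError("need at least two historical observations")
    mu = mean(same_period_history)
    sigma = stdev(same_period_history)
    if sigma == 0:
        # Flat history: any deviation is off the scale in its own direction.
        if current == mu:
            return 0.0
        return float("inf") if current > mu else float("-inf")
    return (current - mu) / sigma

def is_anomaly(current, same_period_history, z_threshold=3.0):
    """True when the current count sits well above the seasonal baseline."""
    return seasonal_zscore(current, same_period_history) > z_threshold

# Hypothetical weekly complaint counts for the same calendar week in five prior years.
seasonal_peak_history = [420, 480, 510, 450, 530]  # this week is always busy
quiet_week_history = [90, 110, 100, 95, 105]       # this week is normally quiet

# 500 mentions is five times an ordinary week of ~100 in both scenarios.
print(is_anomaly(500, seasonal_peak_history))
print(is_anomaly(500, quiet_week_history))
```

The same 500 mentions is routine against the first history and a clear outlier against the second, which is the distinction a raw spike alert cannot make.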

What this use case requires from your data

Speed and breadth, in that order. You need coverage across:

- Review platforms – where product and service complaints concentrate first

- Employer review sites – where internal sentiment surfaces publicly before it becomes media stories

- Local and regional forums – where issues frequently originate before reaching national conversation

- Health and condition-specific platforms – essential for any regulated-sector brand

- Niche industry communities – where practitioner dissatisfaction first emerges

The question to ask any provider: can you show me demonstrated coverage across niche and vertical sources? And what is your actual data latency – measured from when a post appears to when it's available in your system, not a headline figure?

3. Reputation Management

Crisis detection is reactive by nature – you're watching for something to go wrong. Reputation intelligence is the longer-term, structural version of the same problem: understanding how perception of your brand, your category, and your competitors shifts over months and years, and why.

The difference between a signal and a trend

➡️ A spike in negative mentions is a signal. A six-month drift in the language customers use to describe your product – moving from "reliable" toward "slow" or "clunky" – is a trend.

Signals get caught by monitoring tools. Trends only become visible when you have enough historical depth to see the direction of travel.
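To make the idea of language drift concrete, here is a minimal sketch that tracks what share of mentions each month contain a given descriptor. It assumes mentions arrive as `(month, text)` pairs and uses naive whole-word matching; both are simplifications chosen for illustration, and real drift analysis would need better text handling than keyword search.

```python
import re
from collections import defaultdict

def descriptor_share_by_month(mentions, descriptors):
    """For each month, the fraction of mentions containing each descriptor word."""
    patterns = {
        d: re.compile(rf"\b{re.escape(d)}\b", re.IGNORECASE) for d in descriptors
    }
    totals = defaultdict(int)
    hits = defaultdict(lambda: defaultdict(int))
    for month, text in mentions:
        totals[month] += 1
        for d, pattern in patterns.items():
            if pattern.search(text):
                hits[month][d] += 1
    return {
        month: {d: hits[month][d] / totals[month] for d in descriptors}
        for month in sorted(totals)
    }

# Hypothetical mentions, six months apart.
mentions = [
    ("2025-01", "Rock solid and reliable"),
    ("2025-01", "Reliable, does the job"),
    ("2025-06", "Getting slow lately"),
    ("2025-06", "Still reliable but clunky UI"),
]

shares = descriptor_share_by_month(mentions, ["reliable", "slow", "clunky"])
for month, row in shares.items():
    print(month, row)
```

Tracking shares rather than raw counts keeps the comparison fair when overall mention volume changes from month to month.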

But most organisations have neither the archive depth nor the cross-source coverage to track reputational trends properly. They're measuring brand sentiment on the platforms they already manage, against a baseline of the last thirty days.

What reputation intelligence makes possible

Done properly, long-run reputational tracking surfaces things that no individual signal would reveal:

- Category perception shifts – is the way your market thinks about your product type changing? Are the words customers use to describe the problem you solve evolving?

- Competitor perception gaps – not just what customers say about a competitor, but how that sentiment has moved relative to yours over twelve months

- Emerging narrative risks – themes that appear repeatedly across disconnected communities before they coalesce into a public story

- Share of voice over time – not as a vanity metric, but as a proxy for whether your brand is becoming more or less central to the conversations that matter in your category

Spotlight: Employer reputation management

The most underserved application of long-run reputation tracking is employer reputation, where social data has the clearest edge over any other research method.

HR and talent acquisition teams have always cared about how the organisation is perceived by candidates. But most of what they're working with is lagging, incomplete, or both: annual engagement surveys, Glassdoor scores checked occasionally, anecdotal feedback from recruiters. None of it tells them how perception is actually moving, or why, or how they compare to competitors in the talent market right now. ⚠️

Social data changes that. The conversations that shape employer reputation:

- How employees describe their experience

- How candidates discuss a company's culture before accepting an offer

- How former staff frame their departure

- What people in a given industry say about which employers are worth joining

➡️ They’re all happening publicly, continuously, and across a range of sources that most organisations aren't watching at all.

That includes Glassdoor and LinkedIn, but it doesn't stop there.

- Industry-specific forums

- Reddit communities organised around professions and sectors

- X threads where practitioners discuss workplace experiences

- Local job boards with review functions

This is where employer reputation actually lives, in language far more candid than anything that surfaces in a structured survey.

The signals this data surfaces are directly useful to both HR and senior management:

- Compensation and benefits perception – are candidates in your target talent pool flagging your packages as competitive, or quietly steering each other elsewhere?

- Culture and management signals – recurring themes in employee reviews and forum discussions often surface leadership or team-level issues months before they affect retention metrics

- Competitor employer positioning – how are your direct competitors perceived as employers in the same talent pools you're recruiting from? Where are their gaps?

- Narrative drift over time – is the language people use to describe working at your company shifting? "Fast-paced" becoming "chaotic", "collaborative" becoming "political": these are early indicators, not lagging ones

- Candidate decision signals – forum discussions among job seekers in your industry reveal what factors are actually driving offer acceptance and rejection, not what candidates tell recruiters

What reputation management requires from your data

Depth over speed. Archive access is the entire point: you're tracking how perception evolves, not just what's happening right now.

- Multi-year historical data – meaningful trend analysis needs at least twelve months; three to five years is where the real patterns emerge

- Consistent source coverage – trend data is only reliable if the sources being monitored don't change; gaps or additions mid-timeline skew the trend

- Cross-source aggregation – employer reputation lives across Glassdoor, LinkedIn, professional forums, and social platforms simultaneously; single-source tracking gives you one dimension of a multi-dimensional picture

- Comparable baselines – the ability to query historical data at speed, so you can benchmark current perception against any prior period without waiting for a report to be run

4. Digital Customer Journey Analytics

Most organisations map the customer journey from the inside out, tracing the steps a customer takes through their own touchpoints (ad, landing page, trial, onboarding, renewal). What is rarely captured is what happens in between those touchpoints.

➡️ The research conversation before the first visit. The forum thread consulted before the buying decision. The community discussion that happens after a feature update lands.

Social data is the only source that fills those gaps. It captures the customer journey as the customer actually experiences it, not as the CRM records it.

Listening at every stage, not just the ones you own

The buying and consumption journey for most B2B and B2C products now runs across a dozen sources before a decision is made. A potential buyer might encounter your category through a LinkedIn post, research it in a professional forum, compare options on a review platform, check a subreddit for unfiltered takes, and only then visit your website. Your analytics stack sees the last step. Social data captures the whole path.

The same logic applies post-purchase. Product teams want to know how customers are actually using a feature. Not how they said they'd use it in the onboarding survey, but what they're asking about in community threads two months in, what workarounds are circulating in user forums, what complaints cluster together before they surface in support tickets.

Each stage of the journey produces a different kind of signal, in a different place:

- Awareness and category formation – how prospects describe the problem your product solves, in their own language, before they've encountered your brand. This is where positioning gaps and messaging opportunities live

- Consideration and comparison – the direct product comparisons, feature debates, and vendor switching discussions happening on review platforms, Q&A sites, and professional communities. When a cluster of competitor reviews centres on the same friction point, that's a positioning opportunity visible months before any analyst report names it

- Decision signals – forum threads where buyers describe what tipped a decision, what objection almost killed the deal, what they wish they'd known before signing. Sales teams who read these conversations can run a different kind of discovery call

- Onboarding and adoption – the workarounds, feature requests, and confusion points that surface in user communities and support forums shortly after purchase. Product teams tracking these signals have already started the next roadmap conversation before the QBR

- Retention and expansion – the language drift that precedes churn. When customers who were enthusiastic advocates start describing your product differently (more qualified, more hedged, more comparative) that shift is visible in the data before it shows up in NRR

What this use case requires from your data

Depth over speed. For journey analytics, latency matters far less than completeness. You want full conversation threads, not just original posts – because the value is often in the reply chain, where users add context, challenge claims, or reveal the specific circumstances that drove a decision.

Source coverage needs to map to where your customers actually are at each stage of their journey:

- Review platforms – for consideration-stage comparisons and post-purchase sentiment

- Professional and industry forums – for practitioner-level discussion at every stage

- Q&A platforms – for feature requests, workarounds, and switching signals

- E-commerce reviews – for price sensitivity and competitive substitution patterns

- Employer review sites – for strategic signals about how organisations are evaluating and using tools internally

- Social platforms and communities – for awareness-stage language and cultural signals that precede formal buying behaviour

Historical data is non-negotiable. Journey analytics only reveal their full value over time. You need to track how conversations evolve across the buying cycle, how sentiment shifts between stages, how a competitor's customer perception six months ago compares to today. A provider without deep archive access gives you a snapshot of one stage. You need the full timeline.

5. Regulated Industry Intelligence: Pharma and Financial Services

This is where the stakes are highest, and where a gap in your data coverage stops being an analytical problem and starts being a compliance one.

Pharma: social data as pharmacovigilance infrastructure

In pharmaceutical and life sciences, social data is increasingly embedded in pharmacovigilance infrastructure.

NLP analysis helps companies categorise and prioritise adverse event reports. And sentiment analysis applied to patient communities enables brands to track how patients are actually experiencing medications – the emotional context, the language patterns, the signals that precede formal reporting.

Why social data catches what formal reporting misses

A major scoping review of 60 studies found social media data to be particularly valuable in identifying new or unexpected adverse events in a timelier manner than traditional spontaneous reporting systems.

Traditional pharmacovigilance relies on formal reporting to regulatory agencies – a process well-documented for significant underreporting and time delays. One study comparing Reddit adverse event reports with the FDA's FAERS database found notably higher reports of certain adverse events on social forums than in official filings, particularly for events patients found socially sensitive to report formally.

This isn't brand monitoring, it's safety surveillance. And the sources you have access to are critical: adverse event signals almost never appear first on mainstream social platforms. They appear in patient forums, condition-specific communities, caregiver support groups, and health-focused platforms.

Financial services: the same logic, different signals

In financial services, the use case looks different but the underlying logic is the same: social data provides context that structured financial data doesn't.

➡️ Know Your Customer due diligence, entity research, and market risk monitoring all benefit from understanding what's being said publicly about companies, individuals, and industries.

Sentiment shifts on professional forums can precede formal disclosures. Conversations in industry communities can surface concerns before they become formal investigations.

What this use case requires from your data

Completeness above everything else. Missing data in these contexts is a gap in a compliance function.

For pharma, the essential sources are:

- Patient communities and condition-specific forums

- Caregiver and support platforms

- Health-focused discussion boards

- Regional and non-English-language sources – adverse events often surface first in non-English-speaking communities

For financial services, the priority sources are:

- Professional networks and industry forums

- Public discussion boards where market participants share context outside formal filings

- Employer review platforms for signals about organisational health

The additional requirement: methodology transparency

In both sectors, you need to know exactly how your provider accesses data, what happens to that access when platforms change their policies, and how completeness is verified. A provider who can't show you their source breakdown with specificity is not a safe choice in a regulated context – regardless of what their headline coverage numbers say.

💡 Read our blog about whether web scraping is legal in 2026.

6. R&D and Sales Intelligence

Social data is often framed as a marketing tool. But in 2026, R&D and sales are among the teams getting the most value from it.

Both functions are asking the same underlying question from different angles: what do customers actually need, and where are the gaps the market isn't filling yet?

The difference is that R&D is asking it to shape what gets built, and sales is asking it to shape how existing products get positioned and sold. Social data answers both, if the dataset is the right one, of course.

R&D: hearing what customers say when nobody's asking

Formal feedback channels capture what customers are willing to say to a company representative, in a structured format, at a moment the company controls. That's useful, but it’s a very edited version of what customers actually think.

The unedited version lives elsewhere. ⬇️

In the forum thread posted at 11pm by someone who hit a wall with your product and wanted to vent to people who'd understand. In the subreddit where users share workarounds for features that don't exist yet. In the patient community where people describe a medication's real-world effects in language no clinical trial captures.

For R&D, the value is in listening across the entire stakeholder landscape, at every stage of the consumption journey:

- Unmet needs before anyone has named them – how prospects describe the problem they're trying to solve, before they've encountered your product or your category's standard vocabulary. This is where genuinely new product ideas come from

- Adoption friction in real time – the workarounds, confusion points, and missing-feature requests that appear in user communities weeks after launch. Roadmap inputs that arrive long before formal feedback cycles catch up

- Perception drift over time – how the language around your product shifts. Enthusiasm that becomes qualified. Features mentioned less. Competitor names appearing in contexts where they didn't before. These are early signals, not lagging ones

- The wider stakeholder picture – what practitioners, commentators, and adjacent industry voices are saying about your category. R&D strategy that only listens to direct customers misses the forces reshaping the market around them

Over 5.4 billion people use social media globally, but they are not evenly distributed across platforms or topic conversations.

Research confirms that social media users systematically oversample more privileged, educated, and socioeconomically advantaged groups. And within any given platform, the 90-9-1 rule means the visible conversation is shaped overwhelmingly by a tiny minority of highly vocal users – whose opinions, by definition, are not representative of the broader user population.

So you get a dataset that appears to represent consumers but actually represents a vocal, online-engaged subset of them. Insights built on it can feel credible, look credible, and be systematically wrong.

Sales: knowing what the market knows before it says it out loud

The best-informed sales teams aren't just better briefed on their own product. They understand the competitive landscape and customer behaviour in ways that change how they show up in conversations.

Three things social data makes possible that weren't before:

- Spotting market gaps before they become obvious. When the same unmet need surfaces repeatedly across forums, review platforms, and community threads (a feature that doesn't exist, a problem every current solution handles badly, a use case the whole category keeps ignoring), that's a pattern. A sales team that can name that pattern in a discovery call, before the prospect does, is running a different kind of conversation.

- Reading competitor vulnerability in real time. The most actionable competitive signals appear in what a competitor's customers are saying to each other, in places those customers don't expect anyone commercial to be watching. Sales teams tracking these signals can anticipate objections before they're raised and time outreach to moments of genuine competitor weakness.

- Understanding what actually drives buying decisions. Forum discussions among buyers in your category reveal the real evaluation criteria – not what buyers tell sales reps, but what they tell each other. What almost killed the deal. What made one vendor feel trustworthy and another feel slick. What the stated criteria were versus the ones that actually mattered.

What both use cases need from the data

Representativeness over volume. The question isn't how much data, it's whether the data reflects the population you're drawing conclusions about. A large dataset from the wrong sources produces confident, wrong answers.

The source mix should map to where your customers, your competitors' customers, and the practitioners in your space actually talk:

- E-commerce reviews – verified purchase sentiment, closest to real behaviour

- Category forums – detailed, high-engagement discussion from people who care enough to write at length

- Professional communities – practitioner signals that tend to precede formal market shifts

- Regional and local platforms – market-level variation that national datasets flatten out

- Broader community platforms – cultural context and early-stage signals before a need has a name

Metadata is half the signal. Verified purchaser status, engagement depth, author context, timestamps – these are what separate a genuine pattern from three vocal users dominating a thread. Without them, a spike looks like a trend. With them, you can tell the difference.
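As an example of what author metadata makes possible, the sketch below checks how much of a spike comes from its most active authors. The `author_id` field and the cut-offs (top three authors, a 50% share, ten distinct authors) are assumptions picked for illustration, not established thresholds.

```python
from collections import Counter

def top_author_share(posts, top_n=3):
    """Fraction of posts written by the top_n most active authors."""
    counts = Counter(p["author_id"] for p in posts)
    if not counts:
        return 0.0
    top = sum(n for _, n in counts.most_common(top_n))
    return top / sum(counts.values())

def looks_like_broad_pattern(posts, top_n=3, max_share=0.5, min_authors=10):
    """Heuristic: many distinct authors and no small group dominating the volume."""
    distinct = len({p["author_id"] for p in posts})
    return distinct >= min_authors and top_author_share(posts, top_n) <= max_share

# Two hypothetical spikes of 30 posts each.
# Spike A: three authors wrote 27 of the posts.
spike_a = [{"author_id": f"u{i % 3}"} for i in range(27)] + [
    {"author_id": f"v{i}"} for i in range(3)
]
# Spike B: 30 different authors wrote one post each.
spike_b = [{"author_id": f"u{i}"} for i in range(30)]

print(top_author_share(spike_a), looks_like_broad_pattern(spike_a))
print(top_author_share(spike_b), looks_like_broad_pattern(spike_b))
```

Both spikes have identical volume, so a mention count alone cannot separate them; the author field is what distinguishes them.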

How to Evaluate Your Social Data Provider

Every one of these use cases has a different data signature – a specific combination of source types, latency requirements, depth characteristics, and quality standards. The mistake isn't using social data. It's applying the same general-purpose dataset to every problem without asking whether it was designed for yours.

Most providers get evaluated on UI, pricing tiers, and coverage headline numbers. None of that tells you whether the data is fit for your use case.

Before signing anything, define what you actually need, then ask five questions:

1. Show me your source breakdown. What percentage of data comes from each source type? If any single source accounts for more than 50%, your insights (and any AI built on top of them) are shaped by that platform's demographics, not your market's.

2. What is your actual data latency, for my specific sources? Not an average. Measured from when a post appears to when it's available in your system, for the exact sources your use case depends on. For crisis detection, hours matter. For competitive intelligence, they often don't.

3. How do you access data, and what happens when a platform changes its terms? Providers entirely dependent on official APIs face fragility every time a platform changes its policies. Diversified access architecture is more resilient – and a provider who answers this question vaguely is telling you something important.

4. Do you capture full conversation context, or just original posts? For AI training, competitive intelligence, and pharmacovigilance, the reply threads, engagement metadata, and author context often carry more signal than the original content. Providers who only deliver top-level posts are delivering half the data.

5. Can you verify coverage for the specific sources my use case requires? "150+ sources" doesn't tell you whether those sources include the patient communities, professional forums, or regional platforms that matter for your problem. Ask for demonstrated, specific coverage in your domain, not a total count.
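If a provider shares sample records with timestamps, question 2 can be checked directly rather than taken on trust. The sketch below assumes each record has a `posted_at` and an `available_at` timestamp alongside its `source`; those field names are hypothetical and will differ between providers.

```python
from datetime import datetime
from statistics import median

def latency_by_source(records):
    """Median and worst-case ingestion latency, in seconds, for each source."""
    by_source = {}
    for r in records:
        delay = (r["available_at"] - r["posted_at"]).total_seconds()
        by_source.setdefault(r["source"], []).append(delay)
    return {
        source: {"median_s": median(delays), "max_s": max(delays)}
        for source, delays in by_source.items()
    }

# Hypothetical sample records.
records = [
    {"source": "patient_forum",
     "posted_at": datetime(2026, 1, 5, 9, 0),
     "available_at": datetime(2026, 1, 5, 15, 0)},
    {"source": "patient_forum",
     "posted_at": datetime(2026, 1, 5, 10, 0),
     "available_at": datetime(2026, 1, 5, 12, 0)},
    {"source": "review_site",
     "posted_at": datetime(2026, 1, 5, 9, 0),
     "available_at": datetime(2026, 1, 5, 9, 5)},
]

for source, stats in latency_by_source(records).items():
    print(source, stats)
```

Reporting latency per source rather than as one blended average matters because a fast mainstream feed can hide hours of delay on the niche sources a use case actually depends on.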

If you're weighing whether to build your own data pipeline rather than buy access to one, this post works through the full cost-benefit analysis – including where the hidden costs of building tend to surface.

Final Thoughts

Social data isn't a category. It's a raw material, and like any raw material, what matters is whether it's the right grade for the job.

The teams generating real value from it in 2026 have:

1️⃣ Defined what they needed

2️⃣ Asked the right questions before signing a contract

3️⃣ Treated the data layer as infrastructure, not a line item.

The use case comes first, the data requirements follow. Everything else is downstream of that.

See what the right data layer for your use case looks like

Find out if your current data coverage is fit for purpose. Datashake provides access to 150+ sources through a single API – with the coverage depth, archive history, and source transparency that serious use cases demand.

Book a call with the Datashake team →
