How to Map the Full Customer Voice by Combining Social and Review Data

Social data and review data are almost always managed by different teams, analysed in different tools, and treated as separate workstreams.

Your customers are out there telling you exactly what they think. The problem is they're saying different things in different places, and your team is probably only listening to half of it.

This guide explains what each data type captures, where each one falls short on its own, and how combining them produces a more complete and accurate picture of customer opinion.

What is the difference between social data and review data?

1️⃣ Social data is the umbrella term for all publicly available, user-generated content across the internet: social media posts, online reviews, forum threads, news comments, and more. In that sense, review data is a subset of social data, not a separate category.

Social media data comes from platforms like X, Reddit, Instagram, and LinkedIn – real-time, unprompted, directed at a person's own network.

2️⃣ Review data is structured feedback written by customers after experiencing a product or service, posted to dedicated review platforms like Google, G2, Trustpilot, or TripAdvisor.

Review data comes from dedicated review platforms like Google, G2, Trustpilot, or TripAdvisor – deliberate, post-experience, written for future buyers.

The core differences are timing, intent, and scope.

Review data is focused by definition. It's an opinion about a product or experience, written with a specific purpose: to inform someone else's decision. The subject matter is bounded. The audience is future buyers.

Social media data is broader. It can be product or service feedback, but it can just as easily be an opinion on a trend, a reaction to a news story, or someone simply expressing who they are. The subject is whatever the person felt like saying. The audience is their network.

That breadth is social media data's strength and its challenge. It captures far more of the world, but requires more work to isolate the signal that's relevant to you.

That's why social data is stronger for spotting trends early, detecting brand issues as they form, and understanding what customers are talking about before and during the buying process. And why review data is stronger for product-level intelligence, understanding why customers stay or leave, and capturing the language buyers actually use when they're justifying a decision.

Why neither source alone gives you the full picture

McKinsey's State of the Consumer 2025 found that consumers rate social media as their least trusted source when making purchase decisions. Yet Sprout Social's 2025 Index found that 81% of consumers made spontaneous purchases multiple times a year because of social media.

➡️ Social and review data serve entirely different cognitive functions. Social shapes how someone feels about something before they've consciously decided anything. Reviews are what they reach for when they want to validate a decision they've already started leaning into.

What does social data capture?

Social data records what people say when no one asked them – to their community, in the moment, without filtering for an audience of future buyers.

What social data is uniquely good at

- Emerging trends. Social surfaces what's gaining traction before it solidifies anywhere else. A rising cluster of comparison posts on Reddit can signal that a product category is entering active evaluation for a new audience, often weeks before review volumes shift.

- Pre-purchase signals. Someone posting frustration about a workflow problem hasn't found a solution yet. That's awareness-stage intelligence, in the wild.

- Early warning on brand problems. A complaint pattern building on X can signal a retention problem that NPS surveys haven't caught yet. Brandwatch notes that a cluster of onboarding complaints could indicate an issue that structured feedback channels haven't surfaced, often because customers stop completing surveys before they write the post.

- Category language and associations. The comparisons people make, the adjacent topics they mention, the language they use when they're not talking to you – this cultural context doesn't come from any other source.

- Opinion leaders. Social data shows who is shaping the conversation around a topic and the opinions forming within it.

Where social data falls short

ScienceDirect flags that noise, spam, low-quality data, and false positives are persistent challenges with social data at scale. Algorithms surface emotional extremes, not representative opinion. A viral thread is not widespread opinion.

And critically: social data tells you something went wrong, but rarely which specific feature, for which customer segment, in which context.

What does review data capture?

A review is written after the experience is complete. The reviewer has formed a settled view and chosen to put it on record for future buyers. That produces wholly different data.

What review data is uniquely good at

- Product-specific detail. Reviews are longer-form and follow cleaner syntax than social posts, which makes them structurally extractable for product intelligence in a way social data rarely is.

- Source credibility. On G2, Capterra, and TrustRadius, reviewers are verified users. The opinion is from someone who actually used the product, not a competitor, a bot, or a secondhand account.

- Durability. A review written 18 months ago is still the first thing a prospect reads today. Social posts are algorithmically buried within hours. Review data compounds over time.

- Churn indicators. A declining star rating on G2 or Trustpilot is often an early signal of customer loss. Writing a formal review requires deliberate effort, so it signals a level of considered dissatisfaction that a frustrated tweet usually doesn't.

- Category and trend signals. Aggregate review data across a product category reveals which segments are growing, which are declining, and where customer expectations are shifting. Star rating trends at category level show you which types of solutions buyers are increasingly satisfied or increasingly frustrated with. That's a different kind of intelligence from individual product reviews, and one that's hard to get from social media data alone.

Where review data falls short

Review data is always backward-looking. A product issue developing this week won't appear in reviews for weeks. New trends, live competitive conversations, how a launch is landing right now – none of that is in review data yet.

The sample is skewed too. Most customers never leave a review, as people tend to write them when they're angry or when the experience was genuinely exceptional. The majority in the middle, who used the product, found it acceptable, and moved on, are mostly silent. Review data over-represents the extremes.

Then there's the problem with fake reviews. On e-commerce platforms and review sites where ratings directly affect visibility and sales, the incentive to buy positive reviews (or to commission negative ones against competitors) is significant and well-documented. It's not a reason to dismiss review data, but it is a reason to treat platforms with strong verification differently from those without it. A verified review from a confirmed user on a B2B platform is a different kind of signal from an anonymous rating on a marketplace where the seller's ranking depends on the score.

💡 Many social data pipelines are missing significant portions of the conversation, because of how data is collected and what sources are included. Read about it here

Social data vs review data: a comparison

💡 Before combining social and review data, it's worth asking whether your social data accurately represents the audience you're trying to understand. This framework for evaluating data coverage covers the key questions.

How do social and review data fit into the customer journey?

Awareness

Social leads. People articulate problems before they have solutions, and brand discovery happens through conversation and cultural association. Brandwatch describes social data as showing "which topics and pain points drive initial interest": the exact intelligence the awareness stage needs.

Consideration

Both data types are doing meaningful work here, just differently.

47% of shoppers rate user reviews as the most influential content when evaluating options – compared to 11% for brand-produced social content. Reviews anchor the rational, comparison-stage decision: what do verified users actually think?

Social media data shapes consideration through a different channel. Influencers and opinion leaders demonstrate products in real use – answering questions reviews rarely address, like how something actually looks, feels, or fits into a workflow.

UGC from everyday customers adds a layer of lived experience: tutorials, unboxings, real-world context that gives a product credibility beyond a written verdict. Neither replaces the other. Reviews tell you what people concluded. Social media data shows you what using it looks like.

Reddit comparison threads sit in the middle: social data that behaves like review intelligence, where the evaluation is happening collectively and in public.

Decision

Reviews are close to definitive. 92% of consumers trust reviews more than advertising, and 41% read 4–7 reviews before purchasing. The final validation before committing is almost always review-led.

Post-purchase

Both matter differently. Review data reflects settled dissatisfaction: declining star ratings are a churn indicator, usually with a 2–4 week lead time. Social reflects the immediate reaction: complaints, advocacy, and recovery opportunities in real time.

Advocacy

Social drives reach – organic sharing spreads in ways a review never will. Review data creates the durable, searchable record that shapes the next buyer's decision.

A Springer study published in 2025, drawing on 342 research articles, found that both review and social data influence customer behaviour across all journey stages, but through different mechanisms at each stage.

What does it mean when social and review sentiment diverge?

The gap between social and review sentiment is itself an underused signal. Comparing social sentiment against structured feedback "can reveal the conversations that impacted your score", and cross-referencing review data can narrow down the root cause.

There are three patterns worth knowing ⬇️

- Social goes negative, reviews hold steady. Usually a moment rather than a trend: a campaign that landed badly, a news cycle, a customer service incident that spread. Respond to the situation; it likely doesn't reflect a structural product problem.

- Reviews decline, social looks neutral. This is the one to watch. Customers who are quietly deciding to leave often don't vent publicly; they just go. Their dissatisfaction shows up in reviews before it shows up in churn data, because writing a considered review is something people do when they've decided they're done. Social looks fine because the conversation has gone private.

- Both declining simultaneously. Something structural. The product, the experience, or the positioning has a real problem that has settled into customer opinion rather than just spiking in reaction to an event.
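As a rough illustration, the three patterns above can be expressed as a small classifier. This is a minimal sketch, not a production method: it assumes both sources have already been reduced to sentiment series on the same scale (here -1 to 1), and the function names, the half-versus-half trend measure, and the 0.1 threshold are all hypothetical choices.

```python
from statistics import mean

def trend(series):
    """Mean of the later half minus mean of the earlier half (assumes len >= 2)."""
    half = len(series) // 2
    return mean(series[half:]) - mean(series[:half])

def classify_divergence(social, reviews, threshold=0.1):
    """Map two sentiment series onto the three divergence patterns.

    `threshold` is an illustrative cut-off for what counts as a decline.
    """
    social_down = trend(social) < -threshold
    reviews_down = trend(reviews) < -threshold
    if social_down and reviews_down:
        return "structural"      # both declining simultaneously
    if social_down:
        return "moment"          # social goes negative, reviews hold steady
    if reviews_down:
        return "quiet_churn"     # reviews decline, social looks neutral
    return "no_divergence"

# Usage: weekly sentiment averages for one brand (made-up numbers)
print(classify_divergence([0.4, 0.4, 0.4, 0.4], [0.6, 0.6, 0.3, 0.2]))
```

In practice the threshold and window would need tuning per brand and per platform, since review series usually move more slowly than social series.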

How to use combined social and review data in practice

Product roadmap prioritisation

Social surfaces early-stage feature requests in casual language. Reviews surface the same requests with specific, repeatable language that makes prioritisation defensible. When a theme appears in both, it's a signal worth acting on.

Competitive intelligence

Social shows what customers are saying about competitors right now. Review data on G2 and TrustRadius shows why buyers chose or left those competitors – with verified, detailed reasoning. Sprinklr reports that companies using social listening for competitive intelligence adapt to market shifts roughly 20% faster.

Messaging and copy

Reviews contain the language customers use to justify their choices to other people. HubSpot research found that brands using customer-derived phrasing in early-funnel content saw around 28% better engagement. Social shows whether that language is resonating once it's deployed.

Churn prediction

An account showing declining review scores on B2B platforms alongside increasing negative social mentions is a meaningfully different risk profile than one showing only one of those signals. The combination distinguishes genuine churn risk from noise.

Brand health monitoring

Social is the early indicator – fast-moving, reflective of the current moment. Reviews are the slower-moving structural signal, less reactive but more reliable over time. Reading both gives you a current pulse and a longer-term trend.

ICP accuracy

The people talking about your category on social and the people buying your product are often different populations. Where those audiences diverge (in language, in platform, in the problems they emphasise) there's usually a gap between your marketing assumptions and your buyers' reality.

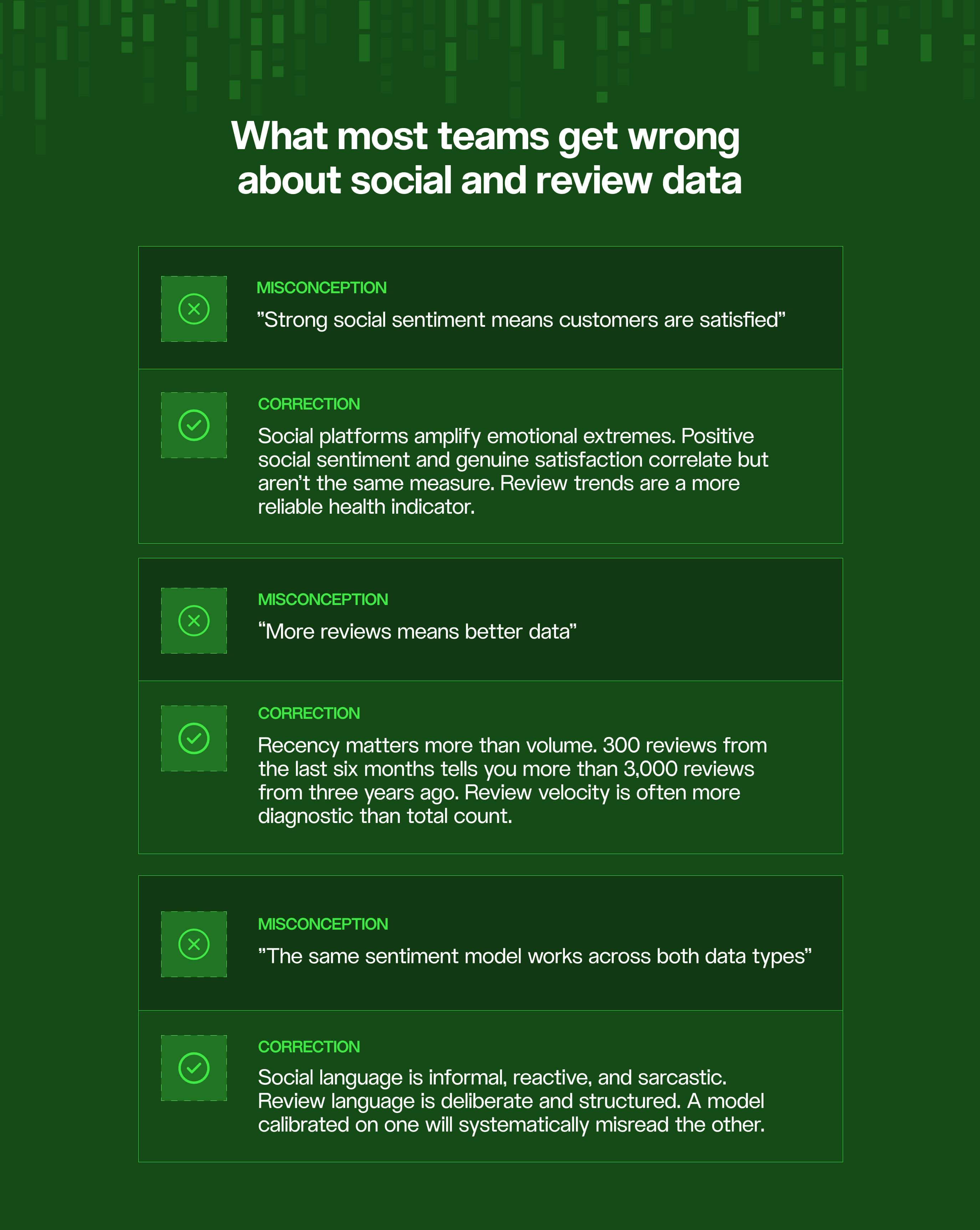

Three common misconceptions about social and review data

Misconception 1: Strong social sentiment means customers are satisfied

Social platforms algorithmically amplify emotional content, enthusiasm and outrage both. What surfaces in a social listening tool is not a representative sample of customer opinion; it's the emotionally intense end of it. A brand can generate consistently positive social content while review scores on G2 or Trustpilot quietly decline, because the customers who are calmly deciding to leave don't post about it. They write a considered review and move on. Positive social sentiment and genuine customer satisfaction correlate, but they're not the same measure.

Misconception 2: Review volume is the main indicator of data quality

A platform with 3,000 reviews from three years ago tells you less about current product experience than 300 reviews from the last six months.

Total review count matters far less than recency distribution and velocity. A sudden slowdown in new reviews can itself be a signal: either product experience has stabilised, or customers have stopped feeling enough to write. For competitive intelligence purposes, a competitor's review volume is less informative than the rate at which their recent reviews are improving or declining.
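To make recency distribution and velocity concrete, here is a minimal sketch that computes both from a list of review dates. The function names and the 180-day and 90-day windows are assumptions for illustration, not a standard.

```python
from datetime import date, timedelta

def recent_share(review_dates, today, window_days=180):
    """Fraction of all reviews written within the last `window_days`."""
    if not review_dates:
        return 0.0
    cutoff = today - timedelta(days=window_days)
    recent = sum(1 for d in review_dates if d >= cutoff)
    return recent / len(review_dates)

def velocity_change(review_dates, today, window_days=90):
    """Review count in the latest window minus the count in the window before it."""
    start_recent = today - timedelta(days=window_days)
    start_prior = today - timedelta(days=2 * window_days)
    recent = sum(1 for d in review_dates if start_recent <= d <= today)
    prior = sum(1 for d in review_dates if start_prior <= d < start_recent)
    return recent - prior

# Usage with made-up dates: a negative velocity_change means new reviews are slowing down
dates = [date(2026, 4, 1), date(2026, 3, 1), date(2025, 12, 15), date(2023, 1, 1)]
today = date(2026, 4, 27)
print(recent_share(dates, today), velocity_change(dates, today))
```

Comparing these two numbers across competitors is one way to look past raw review counts.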

Misconception 3: The same sentiment model works across both data types

Social language is short, informal, sarcastic, and emotionally volatile. Review language is longer, considered, structured, and written for an audience of strangers.

A sentiment model calibrated on social posts – trained to read "this is sick 🔥" as positive and short negative bursts as significant – will systematically misread formal review language, and a model trained on reviews will miss the irony and cultural shorthand that makes social data interpretable.

This is one of the most common technical errors in combined social and review analysis programs, and one of the clearest arguments for treating the two as structurally different inputs rather than two versions of the same thing.
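One way to treat the two as different inputs is to route each document to a scorer calibrated for its source. The sketch below is deliberately a toy: the word lists are hypothetical placeholders standing in for real, separately trained models, and the only point it makes is that the same word can carry opposite polarity depending on register.

```python
# Hypothetical toy lexicons standing in for two separately calibrated models
SOCIAL_LEXICON = {"sick": 1, "fire": 1, "trash": -1, "mid": -1}
REVIEW_LEXICON = {"reliable": 1, "intuitive": 1, "recommend": 1,
                  "unreliable": -1, "disappointing": -1, "sick": -1}

LEXICONS = {"social": SOCIAL_LEXICON, "review": REVIEW_LEXICON}

def score(text, lexicon):
    """Sum word polarities; words not in the lexicon count as neutral."""
    tokens = text.lower().split()
    return sum(lexicon.get(t.strip(".,!?"), 0) for t in tokens)

def score_by_source(text, source_type):
    """Pick the scorer by source type instead of using one model for everything."""
    return score(text, LEXICONS[source_type])

# Usage: the same phrase reads differently depending on where it was posted
print(score_by_source("this is sick", "social"))   # slang reading
print(score_by_source("this is sick", "review"))   # literal reading
```

A real pipeline would swap the lexicons for proper models, but the routing step stays the same.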

Why is combining social and review data technically difficult?

Social data and review data arrive in different formats, on different cadences, from different source types. Social is near-real-time.

Review data is asynchronous – moderated on some platforms, delayed on others, with lag that varies by source. The same brand appears differently across dozens of platforms. Sentiment calibration that works for an informal social post doesn't work the same way for a formal B2B review.

56% of marketing and insights teams cite fragmented data sources as their primary challenge, and 46% cite data quality. Both problems compound when working with two structurally different data types simultaneously.

Many social listening tools were built for volume and speed, not granular product intelligence. And review analysis tools were built for theme extraction, not real-time signal processing. Alterna CX describes the result of running them separately as "incomplete insights" – an understatement for anyone who's tried to reconcile the two manually.

💡 For teams that want to work directly with the underlying data rather than a dashboard, headless social listening offers a different infrastructure model – one that separates data access from analysis and gives teams more control over how each source is used.

Getting value from combined social and review data means solving the data layer problem first: clean, normalised, combined access to both types before asking analytical questions of them.

At Datashake, we provide unified API access to social and review data across 150+ sources, and the teams we work with most commonly run into three specific problems when combining data types for the first time.

1️⃣ First, entity resolution: the same brand appears differently across platforms. Different spellings, abbreviations, and product names that need to resolve to a single entity before analysis is meaningful.

2️⃣ Second, temporal misalignment: social data arrives in near-real time while review data is moderated and asynchronous, creating a lead/lag relationship that needs to be accounted for rather than ignored.

3️⃣ Third, sentiment register mismatch: a model calibrated on informal social language will systematically misread formal review language, and vice versa.
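The first two problems can be sketched in a few lines. This is an illustrative outline under stated assumptions, not a description of how any particular provider handles it: the alias table, the brand names, and the fixed lag in weeks are all hypothetical, and real entity resolution usually needs fuzzy matching on top of simple normalisation.

```python
import re

# Hypothetical alias table: normalised spellings mapped to one canonical entity
ALIASES = {
    "acme": "Acme Corp",
    "acme corp": "Acme Corp",
    "acme crm": "Acme Corp",
}

def resolve_entity(raw_name):
    """Normalise case, punctuation, and spacing, then look up the canonical name."""
    key = re.sub(r"[^a-z0-9 ]", "", raw_name.lower())
    key = re.sub(r"\s+", " ", key).strip()
    return ALIASES.get(key)  # None when the name is unknown

def align_with_lag(social_weekly, review_weekly, lag_weeks):
    """Pair social week t with review week t + lag_weeks.

    Assumes both lists start in the same week and that reviews trail social.
    """
    return list(zip(social_weekly, review_weekly[lag_weeks:]))

# Usage with made-up values
print(resolve_entity("ACME, Corp."))
print(align_with_lag([1, 2, 3, 4], [10, 20, 30, 40], lag_weeks=2))
```

The lag itself varies by platform, so it is better estimated from the data than hard-coded.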

Summary

Social data captures customers in the moment: reactive, emotional, unfiltered. Review data captures them after the fact – considered, specific, written for other buyers. Neither is a substitute for the other.

Social without reviews gives you signal without structure. Reviews without social give you structure without timeliness. The questions that matter most require both ⬇️

- How is sentiment shifting before it becomes settled opinion?

- Where are customers quietly leaving without making noise?

- What language do buyers actually use when they're deciding?

The combination starts with having access to both data types at scale, in a normalised format you can actually work with.

💡 For teams evaluating whether to build the data infrastructure in-house or work with a provider, this guide to the build vs buy decision covers the tradeoffs specific to social data pipelines.

Written by Philip Källberg, Founder, Datashake · Published 04/27/2026 · Last updated 04/27/2026

Philip founded Datashake in 2018 after spending years building Reviewshake, a reputation management platform that ran on online review data – and finding there was no reliable infrastructure to get it at scale. That problem became Datashake: a unified data layer now handling millions of requests daily across social and review data for companies. This post draws on what we've seen across those integrations: how analytics teams, insight platforms, and enterprise brands actually work with combined social and review data in production.

Frequently asked questions

What is the difference between social listening and review monitoring?

Social listening tracks real-time conversations across social media platforms (posts, comments, mentions) to identify trends, sentiment shifts, and brand perception as they form. Review monitoring tracks structured customer feedback on dedicated review platforms like Google, G2, or Trustpilot, which is written after the experience and intended for future buyers. The two serve different analytical purposes and are most effective when used together.

Can social data replace review data for customer insights?

Social data captures immediate emotional reactions but rarely provides the product-specific detail, source verification, or durability of review data. Reviews reflect settled customer opinion written for an audience of future buyers – a level of considered feedback social posts almost never produce. The two data types complement rather than substitute for each other.

Which data type is better for detecting customer churn?

Review data typically provides an earlier and more reliable churn signal. A declining star rating on a review platform like G2 or Trustpilot often precedes cancellation by several weeks, because customers tend to write formal reviews when they've made a decision to leave but haven't yet acted on it. Social monitoring can surface complaint patterns, but review trends are generally more predictive of actual churn behaviour.

How do social and review data differ in the B2B buying process?

In B2B, review platforms like G2, TrustRadius, and Capterra play a particularly significant role in the consideration and decision stages – 70% of the B2B buying journey happens anonymously before any vendor contact, and much of that research happens on review sites. Social data is more relevant for tracking brand perception, industry conversation, and early-stage awareness among professional communities on LinkedIn and forums.

What tools combine social and review data effectively?

Most social listening platforms (Brandwatch, Sprinklr, Talkwalker) and most review analysis tools (Chattermill, Thematic, InMoment) handle one side of this well but not both. Platforms that provide unified API access to both social and review data at the infrastructure level (before downstream analysis) tend to produce more coherent combined intelligence than tools that treat the two as separate modules.
